Latest AI News

OpenAI adds open source tools to help developers build for teen safety

OpenAI said Tuesday it is releasing a set of prompts that developers can use to make their apps safer for teens. The AI lab said the set of teen safety policies can be used with its open-weight safety model known as gpt-oss-safeguard. Rather than working from scratch to figure out how to make AI safer for teens, developers can use these prompts to fortify what they build. They address issues like graphic violence and sexual content, harmful body ideals and behaviors, dangerous activities and challenges, romantic or violent role play, and age-restricted goods and services. These safety policies are designed as prompts, making them easily compatible with other models besides gpt-oss-safeguard, though they’re probably most effective within OpenAI’s own ecosystem.

To write these prompts, OpenAI said it worked with AI safety watchdogs Common Sense Media and everyone.ai. “These prompt-based policies help set a meaningful safety floor across the ecosystem, and because they’re released as open source, they can be adapted and improved over time,” said Robbie Torney, head of AI & Digital Assessments at Common Sense Media, in a statement.

OpenAI noted in its blog that developers, including experienced teams, often struggle to translate safety goals into precise, operational rules. “This can lead to gaps in protection, inconsistent enforcement, or overly broad filtering,” the company wrote. “Clear, well-scoped policies are a critical foundation for effective safety systems.”

OpenAI admits that these policies aren’t a solution to the complicated challenges of AI safety. But they build on the company’s previous efforts, including product-level safeguards such as parental controls and age prediction. Last year, OpenAI updated guidelines for its large language models, known as Model Spec, to tackle how its AI models should behave with users under 18.

OpenAI doesn’t have the cleanest track record itself, however. The company is facing several lawsuits filed by the families of people who died by suicide after extreme ChatGPT use. These dangerous relationships often form after the user circumvents the chatbot’s safeguards, and no model’s guardrails are fully impenetrable. Still, these policies are at least a step forward, especially since they can help indie developers.

Google TV’s new Gemini features keep fans updated on sports teams and more

Google unveiled three Gemini-powered features for Google TV on Tuesday, including AI-powered visual responses, the ability to deep dive into virtually any topic, and narrated overviews of sports games.

A particularly noteworthy addition is the introduction of visual responses. For example, requesting the current score for the Warriors game will result in live scorecards, alongside information on where to view the game. Users can also search for recipes, and Gemini will complement its response with relevant video tutorials.

As showcased at CES 2026, Google TV is also getting “deep dives.” This feature enables users to explore complex topics in greater detail. When prompted, Gemini offers narrated visual breakdowns on all sorts of subjects, such as health and wellness, economics, and technology. For instance, users could ask, “What are the effects of cold plunging?” Users can initiate these deep dives by selecting “Dive deeper” in the response options or by navigating to the Gemini tab on the home screen and selecting the “Learn” option.

For sports fans, Gemini has launched “sports briefs.” This is for viewers who wish to stay updated on their favorite leagues without having to watch every live moment. Users can request timely narrated overviews of events in leagues such as the NBA, NHL, and MLB, making it easy to catch up on highlights and important updates. This comes a year after Google launched “news briefs” for viewers looking to stay informed on the latest headlines.

These features are currently being rolled out to users in the U.S. and Canada. Google has also indicated plans to expand Gemini’s capabilities to Australia, New Zealand, and the U.K. this spring, with additional countries set to follow.

Gemini first launched on Google TV in September 2025, but it was a limited release for select TCL televisions. Since then, it has expanded to more hardware and received several updates, including the ability to adjust settings through natural language, such as fixing dim screens or audio imbalances, making it a faster option than going to the menu. Users can also search their Google Photos library by voice and apply AI styles and effects.

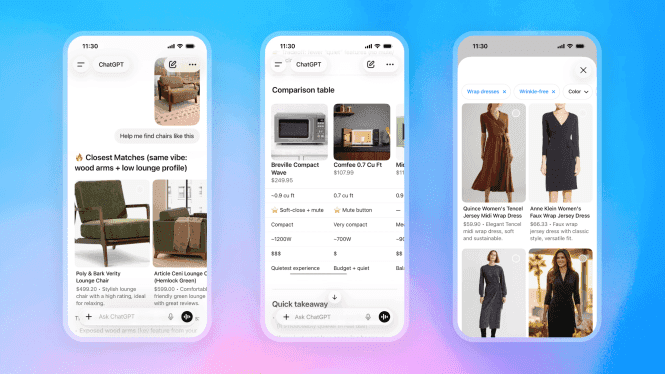

OpenAI’s plans to make ChatGPT more like Amazon aren’t going so well

OpenAI’s plans to make ChatGPT into an e-commerce hub aren’t exactly panning out, at least not yet. In an announcement on Tuesday, the company revealed that it’s pivoting away from a recently launched feature that let users buy items directly from the chatbot’s interface.

OpenAI originally launched buying capabilities in ChatGPT last year, positioning itself as a “shopping assistant” that could connect consumers to relevant vendors. A feature called “Instant Checkout” launched in September and encouraged users to talk with the chatbot about what they were looking to buy and, much like a traditional e-commerce site, add products to a checkout cart within ChatGPT itself. The items were purchased from the vendors themselves, but ChatGPT acted as a portal for those purchases.

Instant Checkout has not been a huge success, however. On Tuesday, amid other update announcements relevant to ChatGPT’s shopping experience, OpenAI said that it would be scaling back the feature. “We’ve found that the initial version of Instant Checkout did not offer the level of flexibility that we aspire to provide, so we’re allowing merchants to use their own checkout experiences while we focus our efforts on product discovery,” the company explained in a blog post.

OpenAI clarified to TechCrunch that merchants would still have the option of incorporating the feature for the time being through apps within ChatGPT. An OpenAI spokesperson said that the company would be deprioritizing the development of Instant Checkout as a standalone feature and that, instead, it planned to prioritize the development of product discovery for consumers. OpenAI would continue to support a variety of checkout paths, including through merchants’ own websites, they said.

The Information and CNBC had previously reported that OpenAI’s new plan was for merchants to create their own apps within ChatGPT, which would then route users to checkout experiences at the merchants’ respective websites. A source who spoke with The Information noted that ChatGPT users simply “weren’t using the chatbot to actually help them make purchases,” and a study from October that looked at referral traffic from ChatGPT found that e-commerce sites were not making much money from ChatGPT users.

Instead of transforming ChatGPT into a shopping portal, OpenAI is now crafting the chatbot into a centralized hub of consumer information. That way, online shoppers will see it as a kind of intermediary research tool that can help them decide what product to ultimately buy. This shopping experience is powered by the Agentic Commerce Protocol (ACP), OpenAI’s open standard for e-commerce, which the company developed in partnership with fintech giant Stripe. The protocol utilizes data provided by participating merchants. Going forward, OpenAI said that ChatGPT would provide more detailed information about products, showcasing side-by-side pictures while also providing other comparative metrics for each item, such as prices, features, and reviews.

Arm is releasing its first in-house chip in its 35-year history

Storied semiconductor and software company Arm Holdings is starting to make its own chips after nearly 36 years of licensing its designs to companies like Nvidia and Apple. At an event Tuesday in San Francisco, the company revealed the Arm AGI CPU, a production-ready chip built for running inference in an AI data center.

The UK-based company developed the chip using its Arm Neoverse family of CPU IP cores and through a partnership with Meta. Meta is also the first customer of the Arm AGI CPU, which is designed to work harmoniously with the tech company’s training and inference accelerator. Arm also counts OpenAI, Cerebras, and Cloudflare, among others, as launch partners.

Arm’s transition to making its own silicon has been anticipated for some time. The company started developing the chips back in 2023, according to CNBC reporting, and the processors are already available to order. TechCrunch reached out to Arm for more information regarding the timeline of the chip’s development and release.

While it might have been expected, the move is a historic deviation from Arm’s long tradition of exclusively licensing its designs to other chipmakers. The company, which is majority owned by Japanese conglomerate SoftBank Group, will now be competing alongside many of its partners.

The fact that Arm is producing a CPU, as opposed to a GPU, is also notable. GPUs, or graphics processing units, have drawn a lot of attention because they are used to train and run AI models. CPUs are an equally important part of a data center rack. In its pro-CPU pitch, Arm notes that these chips manage thousands of distributed tasks, including managing memory and storage, scheduling workloads, and moving data across systems. The CPU has become the “pacing element of modern infrastructure, responsible for keeping distributed AI systems operating efficiently at scale,” the company said. This puts new demands on CPUs and requires an evolution of the processor, Arm said.

CPUs are also becoming harder to come by. In March, Intel and AMD told their customers in China that wait times for their products would be longer due to CPU shortages, Reuters originally reported. Computer prices have also started to rise amid the growing shortage.

Sarvam in Talks to raise up to $250mn from NVIDIA, Accel and HCLTech: Report

If completed, the deal would be the biggest investment to date in a pure-play Indian AI company.

For the First Time Ever, Arm Has Built a Chip of Its Own

Arm unveils a data centre CPU in collaboration with Meta.

Anthropic’s Claude Can Now Use Your Computer to Complete Tasks

Anthropic introduced a new artificial intelligence (AI) feature for Claude on Monday. Part of the AI platform's Computer Use capability, the feature allows the chatbot to control the user's PC and complete tasks autonomously. The capability is currently available as a research preview and can be accessed via its Claude Code or Claude Cowork function. The feature uses agentic AI to work not only with connected apps but also with legacy apps, using a dedicated virtual keyboard and mouse and reading on-screen content through screenshots.

Agile Robots becomes the latest robotics company to partner with Google DeepMind

Agile Robots has landed a partnership with Google DeepMind to develop robots with the artificial intelligence research lab, the latest in a string of robotics companies to do so. Munich, Germany-based Agile Robots announced it entered into a strategic research partnership with Google DeepMind on Tuesday.

Under the partnership, Agile Robots will implement Google DeepMind’s Gemini Robotics foundation models in its bots, and the data collected by the robots will be used to improve the underlying Gemini AI models. The companies will work together to test, fine-tune, and deploy robots that use Gemini foundation models in industrial use cases across sectors including electronics manufacturing, automotive, data centers, and logistics.

“Agile Robots has already installed over 20,000 robotics solutions worldwide, proving intelligent automation at scale,” Zhaopeng Chen, the co-founder and CEO of Agile Robots, said in the deal’s press release. “The huge opportunity ahead lies in autonomous, intelligent production systems that can transform entire industries. Integrating Google DeepMind’s Gemini Robotics models into our robotic solutions positions us at the cutting edge of this rapidly growing market.” A spokesperson said the deal was long-term but declined to share further details about duration or pricing.

Agile Robots was founded in 2018 and has raised more than $270 million in venture capital funding from investors including the SoftBank Vision Fund, Chinese hardware company Xiaomi, and Midas Group, among others. It’s just the latest robotics hardware company to land a partnership with Google DeepMind to advance its tech. Earlier this year, Hyundai-owned Boston Dynamics, the maker of the famous dog-like Spot robot, announced that it was entering a partnership with Google DeepMind to use the company’s AI foundation models to help develop its upcoming humanoid robot Atlas. Boston Dynamics was previously owned by Google from 2013 to 2017.

Broadly, robotic partnerships are on the rise this year. German robotics startup Neura Robotics announced a partnership with Qualcomm in early March that involves Neura Robotics using Qualcomm’s recently announced IQ10 processor series, designed for mobile robots and humanoids, as a reference design for future robots.

Robots are incredibly complicated on both the hardware and software side, so these partnerships make a lot of sense. As companies work to develop bots that can operate autonomously, it makes sense for companies with a specific strong suit (whether that’s hardware, dexterity, or software, to name a few) to partner with other companies that have different expertise. As many in the industry, including Nvidia CEO Jensen Huang, consider physical AI to be the next frontier for the AI market, these partnerships will likely not only continue, but accelerate.

Mirage raises $75M to continue building models for its AI video editing app Captions

Mirage, the maker of video editing app Captions, has raised $75 million in growth financing from General Catalyst’s Customer Value Fund (CVF).

Over the past year, the startup has made significant changes both to its product and corporate identity. The startup rebranded from Captions to Mirage to position itself as an AI lab that produces different models and also caters to industries like advertising and marketing. It has also trained a model specifically for pacing, framing and attention dynamics in short videos. The company also switched to a freemium model in January 2025 to better compete with apps like ByteDance’s CapCut and Meta’s Edits, which was released later in the year. It now offers a video creation suite as well, with some of the features from Captions, that lets companies create and distribute videos in bulk.

Mirage’s co-founder and CEO Gaurav Misra said that the company aims to create more models. However, he didn’t specify what its next set of models would do, only saying that they would be focused on “assembly intelligence,” basically putting together a video using different sources and components.

Speaking about Mirage’s new audio model, which it claims can preserve accents in generated videos, Misra said, “The reason for the audio model was that we noticed that there was a gap in accents because a lot of our users are international. Accents are just very important. There was my own dad’s example. He was trying to use the app, and he would say a word in an Indian accent, and it would always make it sound like he’s talking in an American accent.”

According to data from analytics firm AppFigures, Captions has been downloaded over 3.2 million times in the last 365 days and has brought in $28.4 million in in-app revenue. Misra said the platform has been used to create more than 200 million videos so far, and that it has attracted an international user base, with only 25% of its revenue coming from the U.S.

Currently, Mirage’s marketing suite is available on the web, and Captions largely offers a mobile-first editing suite. The company aims to merge these two platforms to better target small businesses that may be looking to create marketing videos.

Pranav Singhvi, managing director of General Catalyst’s CVF, said Mirage has great product-market fit. “Mirage’s business equation is extremely figured out. They know exactly how to spend that dollar and generate a very attractive ROI. If you think about the market they’re going after, it’s in a sense an infinite total addressable market. You can start out in the creator world, the influencer world, and then use that as a mechanism to sell to enterprises as well,” Singhvi told TechCrunch.

There are tons of companies building AI video-generation pipelines for marketing. Canva has introduced several tools around marketing creation and tracking, while platforms like D-ID, HeyGen, Webflow, and Avataar have been releasing new models and features. However, Singhvi seems confident about Mirage’s positioning and unit economics. “Regardless of what the other tools are out there, Mirage is clearly ahead of the pack from a unit economics standpoint. Ultimately, it’s all a reflection of their product,” he said.

Mirage aims to use the fresh capital to fuel growth and expand in high-growth Asian markets.

BonV Aero, Israel’s ParaZero Partner to Bring Counter-Drone System to India

India’s BonV Aero has secured exclusive rights to deploy and locally manufacture DefendAir for defence and security agencies.

OpenAI Warns Microsoft Dependence a Risk Ahead of IPO Plans: Report

The disclosure forms part of the broader set of risk factors shared with investors during OpenAI’s latest fundraising round.

UP CM Clarifies ₹25,000 Cr MoU With Puch AI After Pushback

The startup previously entered into a similar agreement with the Andhra Pradesh government in October 2025.
