Latest AI News

Cloudflare Makes Sandboxing AI Agents 100x Faster than Containers

The launch provides the infrastructure required for the mass deployment of autonomous agents that require low-latency, isolated execution of AI-generated code.

Anthropic Report Warns AI Skills Could Deepen Labour Market Divide

The report finds growing disparities in AI usage. In India, the data points to a strong tilt towards technical and productivity-driven use cases.

India’s Diversity is Forcing a New Layer of AI Engineering in Retail

The complexity of Indian retail data is a testing ground for Reliance-owned Fynd’s AI systems.

OpenAI’s Sora was the creepiest app on your phone — now it’s shutting down

OpenAI announced on Tuesday that it is shutting down Sora, a TikTok-like social app that launched six months ago. OpenAI did not give a reason for the shutdown, nor did it share information about when the app will officially be discontinued.

When Sora first opened up as an invite-only social network, it seemed like everyone was clamoring for an invite. But like Meta’s Horizon Worlds (the company’s virtual reality social platform, which is also in turmoil despite once being central to the company’s infamous metaverse), Sora didn’t have real staying power. Though the underlying Sora 2 video- and audio-generation model is scarily impressive, there was not sustained interest in an AI-only social feed.

OpenAI’s announcement read: “We’re saying goodbye to the Sora app. To everyone who created with Sora, shared it, and built community around it: thank you. What you made with Sora mattered, and we know this news is disappointing. We’ll share more soon, including timelines for the app and API and details on…”

Sora was intended to function like an AI-first TikTok, cloning the recognizable vertical video feed interface. Its flagship feature, “cameos,” allowed people to scan their faces and make realistic deepfakes of themselves. These “cameos” could be made public, allowing anyone to make videos of their “cameo.” (Cameo took OpenAI to court over the name of this feature and prevailed, forcing the company to change it to “characters.”)

In a turn of events that surprised literally no one, this glorified deepfake app was weird as hell. At launch, Sora felt like an under-moderated minefield of creepy Sam Altman videos. I will never be the same after watching a realistic clone of the OpenAI CEO walking through a slaughterhouse of fattened pigs and asking, “Are my piggies enjoying their slop?”

Sora was not supposed to allow people to generate videos of public figures who did not explicitly opt in, but it was all too easy to evade OpenAI’s guardrails. Sure enough, deepfakes of real people like civil rights leader Martin Luther King, Jr. and actor Robin Williams emerged, prompting both of their daughters to go on Instagram and ask users to stop making videos of their deceased fathers.

After making dozens of videos in which Sam Altman steals Nvidia chips from a Target, users shifted gears. Instead, they intentionally made content using copyrighted characters, inviting legal trouble for the man they loved to deepfake: we saw Mario smoking weed, Naruto ordering Krabby Patties, and Pikachu doing ASMR.

This didn’t unfold as planned. Rather than sue, Disney, a notoriously litigious company, gave OpenAI a $1 billion investment and a licensing deal that would have allowed Sora to generate videos featuring characters from Disney, Marvel, Pixar and Star Wars. It looked like a landmark moment for the AI industry. But with Sora gone, so is the deal, though notably, it appears no money actually changed hands before it collapsed. (Disney offered some polite words about the whole thing on Tuesday, telling the Hollywood Reporter it would “continue to engage with AI platforms” going forward.)

The initial hype around Sora was real. The app peaked in November with about 3,332,200 downloads across the iOS App Store and Google Play, according to data from the mobile intelligence firm Appfigures. If the app continued to grow, then perhaps OpenAI would’ve kept it going, but that’s not what happened. By February, that figure had declined to 1,128,700 downloads, a drop of roughly two-thirds from the peak. That seems like a big number, until you remember that ChatGPT has 900 million weekly active users.

In its lifetime, Appfigures estimates that Sora made about $2.1 million from in-app purchases, which allowed users to buy more video generation credits. It’s hard to imagine that the Sora app’s computing demands tipped the scales that much for a company that’s already operating at a huge loss, but the app was perhaps too much of a liability to keep around if it wasn’t even growing.

When OpenAI launched the Sora app, I prepared for a world in which we could have the tools to make deepfakes of each other at our fingertips. While I rarely make TikToks, I felt obligated to post a PSA that this scary tech was coming fast. It ended up getting over 300,000 views, which is not the norm for my often dormant TikTok account, but this news got a real reaction out of people. I never expected that it would only last six months.

But just because Sora is gone doesn’t mean the threat went with it. The Sora 2 model is still available; it’s just tucked behind the ChatGPT paywall. And OpenAI is hardly alone in making this technology so accessible. It’s only a matter of time before the next social AI video app hits the market, and we’re inundated with another tsunami of clips in which Snow White storms the Capitol.

With $3.5B in fresh capital, Kleiner Perkins is going all in on AI

Kleiner Perkins, the prominent U.S. venture firm, announced on Tuesday that it raised $3.5 billion in fresh capital across two funds, a 75% increase over the firm’s $2 billion fundraise less than two years ago. The firm, founded back in 1972, says it raised $1 billion for its 22nd early-stage venture fund, and $2.5 billion for a separate vehicle designed to fund late-stage growth businesses.

The much larger capital haul is not a surprise. Over the last few years, Kleiner Perkins has managed to secure early stakes in a number of fast-growing AI startups, including Together AI, Harvey, and OpenEvidence. The firm is also an investor in Anthropic and SpaceX, two companies expected to IPO this year.

At a time when exits are few and far between, Kleiner Perkins also realized significant returns from last year’s IPO of Figma, a design software company whose $25 million Series B round it led in 2018. The firm also reportedly scored a decent return when its portfolio company Windsurf was acqui-hired by Google last summer.

A firm famous for its legendary early bets on Amazon and Google, Kleiner Perkins now operates with a lean team of just five partners. The firm has seen some leadership turnover recently: Ev Randle departed for rival firm Benchmark, while Annie Case has transitioned from partner to an advisory role, a Kleiner Perkins spokesperson confirmed.

Kleiner Perkins joins a wave of mega-raises from other VC firms. Thrive Capital recently secured $10 billion in fresh commitments, while General Catalyst is reportedly targeting a similar amount. Meanwhile, an SEC filing confirms TechCrunch’s earlier reporting that Founders Fund has closed $6 billion for its fourth growth vehicle.

Databricks bought two startups to underpin its new AI security product

With an overflowing war chest from its $5 billion raise that closed last month (not to mention billions in revenue), Databricks is acquiring. The company, best known for its cloud data analytics platform, announced on Tuesday that it was launching a new security product called Lakewatch.

Lakewatch takes Databricks’ ability to store massive amounts of data and performs classic Security Information and Event Management (SIEM) tasks, like detecting and investigating threats. Only it does so with the help of AI agents powered by Anthropic’s Claude.

Databricks bought two startups to underpin this new product: Antimatter, in an undisclosed-until-now deal that closed last year, and SiftD.ai, in a deal that came together over the last couple of weeks and closed on Monday, the company told TechCrunch. Terms were not disclosed for either deal.

Antimatter, founded by security researcher Andrew Krioukov, raised $12 million led by New Enterprise Associates in 2022, according to PitchBook estimates. If tiny SiftD.ai had raised money, PitchBook wasn’t aware. SiftD.ai was so young, it had only launched its product in November: an interactive notebook (like a Jupyter notebook) intended to be a tool where people and agents worked together.

The Databricks team knew the startup’s co-founder and CEO Steve Zhang from his many years as chief scientist at Splunk (through 2021). He created the Search Processing Language while there. (His LinkedIn also says he was CTO of Astronomer, of the Coldplay CEO scandal, but left there in 2023 before founding SiftD.)

Both of these acquisitions were of small startups: only a few people in SiftD’s case and fewer than 50 for Antimatter, according to LinkedIn. SiftD appears to be an acqui-hire. With Antimatter, Databricks probably gained some IP, too. Krioukov had demonstrated Antimatter’s tech onstage in 2024 at RSA’s Innovation Sandbox Contest. Antimatter was working on a “data control plane” tool that allowed enterprises to deploy agents securely, while protecting sensitive data.

While Databricks declined to say how many employees it acquired, it confirmed that the startups’ employees did join the company. Krioukov, who’s been at Databricks for months now, is leading the Lakewatch team.

We asked Databricks if it was going to keep shopping for startups and a spokesperson essentially said, yes, that it continuously has its feelers out. “We’re always looking to what’s next: our goal is to stay ahead of the market and close gaps in what our customers need,” the spokesperson said.

Spotify tests new tool to stop AI slop from being attributed to real artists

At a time when AI slop is flooding music streaming platforms, Spotify is beta testing a new “Artist Profile Protection” feature that allows artists to review releases before they go live on their profiles. The idea behind the new tool is to give artists more control over which tracks are associated with their name on the streaming service.

“Music has been landing on the wrong artist pages across streaming services, and the rise of easy-to-produce AI tracks has made the problem worse,” Spotify wrote in a blog post. “That’s not the experience we want artists to have on Spotify, and that’s why we’ve made protecting artist identity a top priority for 2026. Today, we’re announcing a first-of-its-kind solution to a problem that’s affected streaming for years.”

Artists in the beta can review and approve or decline releases delivered to Spotify. Only the releases that they approve will appear on their artist profile, contribute to their stats, and show up in users’ recommendations.

Spotify’s announcement comes a week after Sony Music said that it has requested the removal of more than 135,000 AI-generated songs impersonating its artists on streaming services.

Spotify says that while open distribution has made it easier for independent artists to release music, it also creates opportunities for mistakes and bad actors. Tracks can end up on the wrong artist’s profile due to metadata errors, confusion between artists with the same name, or malicious attempts to attach music to an artist’s profile.

“When that happens, it can impact your catalog, your stats, your Release Radar, and how fans discover your music,” Spotify explains. “We know how frustrating this can be for both artists and fans alike and one of the top requests we’ve heard from artists over the past year is that you want more visibility before music appears under your name.”

Spotify notes that while the new feature isn’t necessary for every artist, it’s designed for artists who have experienced repeated incorrect releases, have a common artist name, or want more control over what appears on their profile.

Artists who are included in the beta will see the feature in their “Spotify for Artists” settings on desktop and mobile web. If they turn “Artist Profile Protection” on, they’ll receive an email notification when music is delivered to Spotify with their name attached to it. From there, they can approve or decline the request.

Anthropic hands Claude Code more control, but keeps it on a leash

For developers using AI, “vibe coding” right now comes down to babysitting every action or risking letting the model run unchecked. Anthropic says its latest update to Claude aims to eliminate that choice by letting the AI decide which actions are safe to take on its own, with some limits.

The move reflects a broader shift across the industry, as AI tools are increasingly designed to act without waiting for human approval. The challenge is balancing speed with control: too many guardrails slow things down, while too few can make systems risky and unpredictable. Anthropic’s new “auto mode,” now in research preview (meaning it’s available for testing but not yet a finished product), is its latest attempt to thread that needle.

Auto mode uses AI safeguards to review each action before it runs, checking for risky behavior the user didn’t request and for signs of prompt injection, a type of attack where malicious instructions are hidden in content that the AI is processing, causing it to take unintended actions. Any safe actions will proceed automatically, while the risky ones get blocked.

It’s essentially an extension of Claude Code’s existing “dangerously-skip-permissions” command, which hands all decision-making to the AI, but with a safety layer added on top.

The feature builds on a wave of autonomous coding tools from companies like GitHub and OpenAI, which can execute tasks on a developer’s behalf. But it takes it a step further by shifting the decision of when to ask for permission from the user to the AI itself.

Anthropic hasn’t detailed the specific criteria its safety layer uses to distinguish safe actions from risky ones, something developers will likely want to understand better before adopting the feature widely. (TechCrunch has reached out to the company for more information on this front.)

Auto mode comes off the back of Anthropic’s launch of Claude Code Review, its automatic code reviewer designed to catch bugs before they hit the codebase, and Dispatch for Cowork, which allows users to send tasks to AI agents to handle work on their behalf.

Auto mode will roll out to Enterprise and API users in the coming days. The company says it currently only works with Claude Sonnet 4.6 and Opus 4.6, and recommends using the new feature in “isolated environments,” sandboxed setups that are kept separate from production systems, limiting the potential damage if something goes wrong.
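
The review-then-run flow described above can be illustrated with a toy sketch. Anthropic has not published the criteria its safety layer uses, so everything here (the `Action` type, the `RISKY_MARKERS` list, and the `review` and `run_auto_mode` functions) is hypothetical. It only shows the general shape of gating each proposed action before it executes, and it is not Claude Code’s implementation.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Action:
    """A proposed step, e.g. a shell command the agent wants to run."""
    command: str
    requested_by_user: bool  # did the user explicitly ask for this?


# Hypothetical patterns standing in for "risky behavior"; the real
# criteria are not public.
RISKY_MARKERS = ("rm -rf", "git push --force", "curl ")


def review(action: Action) -> bool:
    """Return True if the action may proceed without asking the user."""
    looks_risky = any(marker in action.command for marker in RISKY_MARKERS)
    # Block only risky behavior that the user did not request.
    return not (looks_risky and not action.requested_by_user)


def run_auto_mode(actions: List[Action]) -> Tuple[List[str], List[str]]:
    """Split proposed actions into those allowed to run and those blocked."""
    allowed: List[str] = []
    blocked: List[str] = []
    for action in actions:
        (allowed if review(action) else blocked).append(action.command)
    return allowed, blocked


if __name__ == "__main__":
    proposed = [
        Action("pytest -q", requested_by_user=False),
        Action("rm -rf ~/projects", requested_by_user=False),
        Action("rm -rf build/", requested_by_user=True),
    ]
    allowed, blocked = run_auto_mode(proposed)
    print("allowed:", allowed)
    print("blocked:", blocked)
```

A real safeguard would also need to inspect the content the model is processing for prompt injection, which this sketch does not attempt; simple substring matching is used here only to keep the example short.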

Kentucky woman rejects $26M offer to turn her farm into a data center

For generations, Ida Huddleston and her family have owned a farm in northern Kentucky. And they’ve turned down at least one multimillion-dollar offer to preserve it.

Last year, a “major artificial intelligence company” offered them $26 million to sell part of their farm for a proposed data center, according to a recent report from WKRC. Huddleston and her family declined, saying they didn’t want a data center built near them or on any of their 1,200 acres of farmland outside Maysville, Kentucky.

“They call us old stupid farmers, you know, but we’re not,” Huddleston, who is 82, told Local 12 WKRC. “We know whenever our food is disappearing, our lands are disappearing, and we don’t have any water, and that poison. Well, we know we’ve had it,” she said, apparently referring to recent water shortages and ground poisoning that have been widely reported in land near data centers.

In an interview with the news station, Huddleston said she doubted the data center would bring jobs or economic growth to Mason County. “It’s a scam,” she said.

The company, which WKRC did not name, revised its plans and filed a request to rezone more than 2,000 acres in northern Kentucky, according to the report, meaning the AI firm may still build its data center next to Huddleston’s land.

Meet the former Apple designer building a new AI interface at Hark

A secretive AI lab founded by serial entrepreneur Brett Adcock shared new details about what it believes is a novel marriage of model-building and hardware design that will change how humans interact with intelligent software.

The company said in a statement it would design multimodal end-to-end models, its hardware, and its interfaces in tandem to deliver a “seamless end-to-end personal intelligence product.” The system will have a persistent memory of your life and can listen, see, and interact with the world in real time.

How that will be executed remains unclear outside the company, but Hark’s ambition is representative of Silicon Valley’s ongoing hunt for the killer app that will make AI a desired consumer product, not features kludged dubiously into existing digital platforms.

“My view is simple: today’s AI models aren’t nearly intelligent enough, they feel quite dumb, and the devices we use to access them are fundamentally pre-AI,” Adcock wrote in a January internal memo shared with TechCrunch. “We’re moving toward a world that looks more like sci-fi characters Jarvis or Her, with systems that anticipate, adapt, and genuinely care about the people using them.”

Details are intentionally sparse, but Hark points to Director of Design Abidur Chowdhury as a key hire. Previously an industrial designer at Apple credited with leading the design team behind the iPhone Air and other recent models, London-born Chowdhury left last fall after meeting with Adcock and buying into his vision for updating the way humans automate their lives.

In an exclusive interview with TechCrunch, Chowdhury declined repeated invitations to spill the beans on Hark’s roadmap, only saying that the public can anticipate a first release of the company’s AI models this summer. Asked about different approaches to working and living alongside AI, the designer did offer a few clues.

“What was very clear for me at the time is that the world is clearly changing, but we’re using the same devices … everything’s been designed around these existing platforms,” Chowdhury told TechCrunch. “Very few people are really going after what the future is. There’s so much that we could be doing if intelligence was at the base layer of everything we touched instead of becoming an app or a website at that upper layer.”

Chowdhury points to the awkwardness of everyday tasks like filling out forms and sharing information between devices, or mundane chores like booking travel or planning a home renovation.

“Those are entire evenings of time where I have to plan … the anxiety of, you know, I spend my workday thinking about this in the back of my head, oh, I have to do this,” Chowdhury said. “We genuinely believe that all of the small tasks that pile up to be kind of gargantuan things today can be sort of automated from our lives.”

Chowdhury says the company knows what it is building, but can’t yet say how users will experience it. His comments suggest that wearables, like Meta’s glasses, are unlikely.

“I’m not the biggest believer in a lot of the wearable AI platforms that people are talking about right now,” Chowdhury said. “I don’t think it’s appropriate to put a layer between humanity and the interfaces we use in the world. I have similar discomfort with pins, or that kind of stuff that is going around with cameras.”

When generative AI first arrived on the scene, Chowdhury saw it as a flash in the pan, but successive generations of models convinced him that it would change his work. Hark, the word, means to pay attention, which Chowdhury says offers a thoughtful framing for the company’s mission.

“Traditional user experience always is about finding the simplest thing for everyone,” he told TechCrunch. “The future user experience will be finding the right thing for each individual. And I believe that can happen. But it requires a lot of work.”

The focus on elegance and simplicity for users echoes the high points of Apple’s product design, and naturally brings to mind Jony Ive, the legendary former Apple designer who is now developing AI-native hardware at OpenAI. It is a comparison that a Hark spokesperson declined to explore.

Another parallel that comes to mind is how the work on advanced models at Elon Musk’s xAI dovetails with Tesla’s work on autonomous vehicles and humanoid robots. There is similar corporate synergy between Adcock’s humanoid robotics company Figure and the new AI lab. Hark’s models are already being trained on Figure’s robots, although it is not clear to what end. A person familiar with the companies’ plans says there is no intention to combine them.

Hark employs 45 engineers and designers, including former Meta AI researchers and designers from Apple and Tesla, all of whom are working on the same campus that hosts Adcock’s other companies. Hark expects to begin using a new cluster of thousands of Nvidia GPUs in April.

Now Hark, backed by $100 million in personal seed money from Adcock, will join the scramble for talent as the world’s biggest companies try to figure out the format that brings deep learning models into daily life, at a time when frustration with the existing models for digital life is hitting a fever pitch.

“It just feels like there’s an opportunity for better, and I’ve not felt like that since the iPhone came up,” Chowdhury said.

Doss raises $55M for AI inventory management that plugs into ERP

Enterprise resource planning (ERP) systems are often described as a company’s “central brain” because the software connects different departments (including finance, HR, and inventory) into a single database where everyone shares the same information.

In recent years, a new crop of AI-powered ERP startups, such as Rillet and Campfire, has emerged hoping to replace legacy offerings like NetSuite. These companies claim that traditional ERPs are clunky, expensive, and time-consuming to implement.

However, according to Doss co-founder and CEO Wiley Jones, many new AI ERPs lack robust inventory management, the process of ensuring that the data on physical goods remains synced with the accounting ledger. Doss claims to solve this by providing an AI-native inventory management layer that integrates with existing accounting systems, whether traditional ERPs or ones built by AI-based startups.

On Tuesday, Doss announced that it raised a $55 million Series B co-led by Madrona and Premji Invest, with participation from Intuit Ventures. Other new and existing investors in the round include Theory Ventures, General Catalyst, Contrary Capital, and Greyhound Capital.

Doss, founded in 2022, originally focused on a core accounting product similar to those offered by AI-native startups like Rillet and Campfire. But last year, the startup decided that instead of competing with these companies, “we would rather partner with them, and play a different game,” Jones told TechCrunch.

Jones explained that AI-native ERP companies manage accounts receivable, accounts payable, and other finance functions, but most don’t offer procurement and inventory management that integrates with accounting workflows. “We’re building a lot of the traceability for the supply chain, but through the lens of plugging into a finance and accounting partner,” Jones said.

The company’s main partners include Rillet and Campfire. Many clients also use Doss in conjunction with Intuit’s QuickBooks. “The reason that they work with us is that [physical goods management] is not something that they’re likely going to build as a core competency without putting in a lot of energy and effort,” Jones said.

Doss’ core customer base consists of mid-market consumer brands, typically generating between $20 million and $250 million in top-line revenue. One such customer is Verve Coffee Roasters, a high-end specialty coffee brand.

The startup sees itself as competing with traditional ERPs. But these players are not sitting idle in the age of AI, either. NetSuite, for instance, has recently introduced its updated AI ERP. Doss also competes with other agentic procurement startups such as Didero.

While Jones admits that selling two ERP systems, one for accounting and another for inventory management like Doss, “is a hard sell,” he says that legacy ERPs are so hard to implement that many customers are choosing to have two newer, AI-powered systems.

“I think it’s going to be a very intense fight inside of mid-market that ultimately will be determined by whoever rebuilds their architecture to be most legible and usable for agents,” Jones said.

Editor’s Note: This story has been updated to correct the list of Doss’ partners.

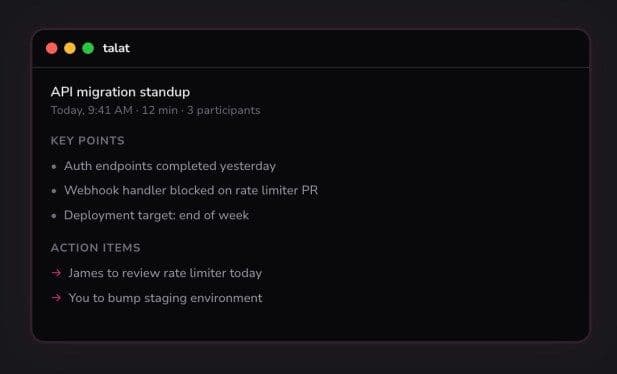

Talat’s AI meeting notes stay on your machine, not in the cloud

The AI-powered notetaking app Granola, valued at $250 million, has become a popular tool among tech industry founders and VCs. But one developer believes there’s demand for a more private, local-only alternative that’s available for a one-time fee and without a subscription. That’s led to the creation of a new Mac app called Talat.

Yorkshire, England-based developer Nick Payne, a self-described computer nerd, says the idea to build a local AI notetaker came about mostly because of a series of happy accidents.

“I think Granola is awesome; it’s a shining example of what you can do with an Electron app [a framework for building desktop applications] given enough love and care,” he told TechCrunch. “When I first tried it, I was fascinated that it managed to record system audio on my Mac without recording video, which was the standard workaround at the time. That led to a ton of research, discovering a relatively new and poorly documented Apple API.”

To make it easier to work with that API (Core Audio Taps, which lets developers tap into a Mac’s audio streams), Payne decided to create an open source audio library, AudioTee.

“During that time, I was slowly piecing together a toolkit, but I never found anything that felt like it could stand on its own as a product rather than just a cool tech demo,” Payne said.

“The state-of-the-art hosted transcription models (the same providers folks like Granola use) are incredible, and it’s viscerally cool to see your speech unfurled onscreen in near real time. But it always nagged me that the trade-off required providing not just my data, but my audio data; my actual voice,” he added.

He then stumbled upon a software toolkit called FluidAudio, a Swift framework that enables fully local, low-latency audio AI on Apple devices. It lets you run small, fast transcription models directly on the Mac’s Neural Engine, Apple’s dedicated hardware for AI processing. That was the piece that made Payne realize he could turn his research into an actual product, one where your audio never leaves your Mac and your transcripts aren’t stored on another company’s servers.

Talat, which was built alongside Payne’s longtime friend and former colleague Mike Franklin, is the result of Payne’s interest in the audio space. The result is a 20MB, one-time purchase that doesn’t require you to create an account or even share analytics data back with the developers. There are no ongoing fees, either.

While some AI notetakers may have more bells and whistles, Talat offers a streamlined set of features. It captures audio from your computer’s microphone when you’re in meeting apps like Zoom, Teams, Meet, and others, and transcribes it in real time. The app tries to assign speakers in real time, but you can reassign them as needed. You can also take notes, plus edit, delete, or split transcript segments. When the meeting finishes, a local LLM generates a summary with key points, decisions, and action items. The notes, transcripts, and summaries are all searchable in Talat, too.

In addition to the privacy angle, Payne said the goal is to give users more options. “We’re leaning into configurability and letting users control where their data goes: pick your own LLM, auto-export to [notetaking app] Obsidian, webhooks that push data out when a meeting finishes, an MCP server,” which is a standardized way for AI tools to connect to outside data sources, “to pull it on demand,” he explained.

Under the hood, the AI is a mixture, “mostly stitched together and abstracted behind FluidAudio,” Payne noted, which he credits with doing a lot of the heavy lifting. For the summarization piece, the app defaults to an AI model called Qwen3-4B-4bit, which can run on even fairly modest hardware. However, users can opt to switch that out to any cloud LLM provider of their choice, or they can choose between two Parakeet variants (speech-recognition models developed by Nvidia) or point it at Ollama (a tool for running AI models locally), giving them more control over the experience.

In time, Talat will add support for more built-in choices and will have integrations for other apps, like Google Calendar and Notion.

At launch, users with M-series Mac computers (those running Apple’s own processors, starting with the M1) can download the app and try it out for free with 10 hours of recordings before deciding to purchase. Talat is available for $49 while in this pre-release version, which is still under active development. When the app hits a 1.0 release, the price will increase to $99.

Payne and Franklin are bootstrapping Talat and plan to keep the core product a one-time purchase going forward.
