Latest AI News

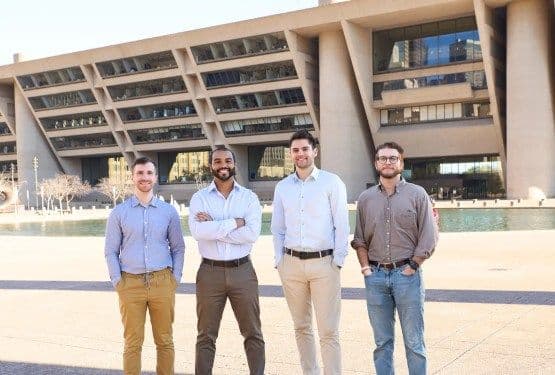

City Detect, which uses AI to help cities stay safe and clean, raises $13M Series A

City Detect, a company that uses vision AI to help local governments monitor the health of buildings and neighborhoods, announced on Friday a $13 million Series A round led by Prudence Venture Capital. The startup launched in 2021, and Gavin Baum-Blake, the remaining co-founder, serves as CEO. He said the company was founded in part because cities were struggling to deal with “urban blight and decay.” The idea was to use advanced computer vision and AI technology to help cities track and fix such problems. City Detect mounts cameras on public vehicles like garbage trucks and street sweepers, captures photos of surrounding buildings as those vehicles pass, then uses computer vision to analyze the images. It’s essentially a Google Maps Street View, but focused on ensuring buildings are up to code. “The problems could be graffiti, illegal dumping, litter that’s on the side of the road,” Baum-Blake told TechCrunch. Then, City Detect works with local governments to fix the issues, a process that usually involves local officials sending a crew out to clean everything up. Right now, tracking dilapidated buildings is very manual, so Baum-Blake considers his competition to be the “status quo.” “They’re able to do 50 per week,” he said of humans tasked with keeping track of decaying buildings, “whereas we’re able to do thousands per week.” The product, which Baum-Blake has patented, has some fun and essential features. The latter is that faces and license plates are always blurred for privacy reasons; the former is that City Detect’s technology can distinguish between street art and vandalism. It also helps governments track whether landlords are not properly maintaining their buildings. “We’re able to see if there’s structural roof issues or we’re able to identify if there’s been storm damage,” Baum-Blake continued. City Detect is in at least 17 cities and works with local governments in places like Dallas and Miami. 
The company has raised $15 million in funding to date and is a member of the GovAI Coalition (an AI governance collective), is SOC 2 Type II compliant (meaning it’s independently certified for privacy), and follows its own responsible AI policy. “We published our Responsible AI policy in response to a consortium of local governments that stated they were looking for clarity on what vendors were actually willing to commit to,” Baum-Blake said. “We committed to this policy so that our local government partners could know what to expect from us.” Baum-Blake said the new funding will be used to hire more engineers and advance some of the storm-damage detection technology. The company also wants to expand throughout the U.S. “We are seeing huge efficiency gains across the departments that we work with, we’re seeing more instances of blight being solved without anyone receiving a citation, we’re seeing tires and litter, and illegal dumping being abated quicker and detected quicker,” he said. “It’s exciting to see technology-forward municipalities lean into predictive AI like City Detect’s models.” Zeal Capital Partners, Knoll Ventures, and Las Olas Venture Capital also participated in the round.


Claude’s consumer growth surge continues after Pentagon deal debacle

Claude’s daily active users are on the rise on mobile devices, as are its new app installs, following the company’s fallout with the Pentagon. After Anthropic CEO Dario Amodei refused to allow the government to use its AI systems for mass surveillance of Americans or to power fully autonomous weapons, the AI model provider behind Claude was marked as a supply-chain risk. However, Anthropic’s stance led many consumers to favor the model, data suggests. App intelligence provider Appfigures reports that U.S. downloads of Claude’s mobile app continue to surpass those of ChatGPT. The most recent figures from March 2 show Claude with 149,000 daily downloads, compared with 124,000 for ChatGPT, the company’s estimates indicate. While download figures offer a window into how many new users are installing the app for the first time, active users offer insight into how many people are actually using it. On that front, another market intelligence provider, Similarweb, found that Claude’s app on iOS and Android devices saw 11.3 million daily active users on March 2, up 183% from the start of the year when usage was around 4 million, and up from 5 million daily active users at the beginning of February. Claude’s growth put it ahead of other AI apps by daily active users, like Perplexity and Microsoft Copilot, but not other top rivals like ChatGPT. This is partially due to the fact that Claude’s jump in usage began later in the month, timed around the news of Anthropic’s tense negotiations with the Pentagon. If these trends continue throughout March, it could rank higher. Of course, ChatGPT still dominates the market by a significant factor, as its daily active users on March 2 were 250.5 million across iOS and Android. Similarweb also reports that Claude’s web traffic has been growing. While it’s still far behind other top AI providers in terms of web traffic, Claude’s web traffic was up 43% month-over-month in February, and up 297.7% year-over-year.
At least some of this growth could be at the expense of ChatGPT, whose web traffic dropped 6.5% month-over-month during the same time period. Gemini also saw a slight bump of 2.1%, which is slower growth than in previous months. Anthropic itself has been touting Claude’s progress, noting that its AI chatbot is now seeing more than 1 million sign-ups per day after becoming the No. 1 app on the U.S. App Store over the past weekend — a position it still holds. The app is also No. 1 in 15 other countries, including Austria, Belgium, Canada, Finland, France, Germany, Ireland, Italy, New Zealand, Norway, Portugal, Singapore, Switzerland, and the U.K. The company noted, too, that Claude has broken its own signup record every day since early last week in every country where Claude is available. ChatGPT’s app uninstalls, meanwhile, have been growing, an earlier report found. Anthropic said it doesn’t comment on third-party data, but a spokesperson noted that daily active users have more than tripled since the beginning of 2026, and paid subscribers have doubled. Updated with Anthropic’s comment after publication.


Anthropic vs. the Pentagon, the SaaSpocalypse, and why competition is good, actually

The Pentagon has officially designated Anthropic a supply-chain risk after the two failed to agree on how much control the military should have over its AI models, including its use in autonomous weapons and mass domestic surveillance. As Anthropic’s $200 million contract fell apart, the DoD turned to OpenAI instead, which accepted and then watched ChatGPT uninstalls surge 295%. As the stakes keep rising, the question remains: how much unrestricted access should the military have to an AI model? On this episode of TechCrunch’s Equity podcast, hosts Kirsten Korosec, Anthony Ha, and Sean O’Kane dig into what startups should think about when chasing federal contracts, especially when nobody seems to know what to do with AI in Washington, and more of the week’s headlines. Listen to the full episode to hear more. Subscribe to Equity on YouTube, Apple Podcasts, Overcast, Spotify and all the casts. You also can follow Equity on X and Threads, at @EquityPod.


Anthropic’s Pentagon deal is a cautionary tale for startups chasing federal contracts

The Pentagon has officially designated Anthropic a supply-chain risk after the two failed to agree on how much control the military should have over its AI models, including its use in autonomous weapons and mass domestic surveillance. As Anthropic’s $200 million contract fell apart, the DoD turned to OpenAI instead, which accepted and then watched ChatGPT uninstalls surge 295%. As the stakes keep rising, the question remains: how much unrestricted access should the military have to an AI model? Watch as Equity hosts Kirsten Korosec, Anthony Ha, and Sean O’Kane break down what startups should know about chasing federal AI contracts, plus the week’s biggest tech stories, from Paramount’s Warner Bros. deal and MyFitnessPal’s Cal AI acquisition to Pinterest’s $1B AI push, Anduril’s $60B valuation, and whether the “SaaSpocalypse” is real. Subscribe to Equity on YouTube, Apple Podcasts, Overcast, Spotify and all the casts. You also can follow Equity on X and Threads, at @EquityPod.


Anthropic’s Claude found 22 vulnerabilities in Firefox over two weeks

In a recent security partnership with Mozilla, Anthropic found 22 separate vulnerabilities in Firefox — 14 of them classified as “high-severity.” Most of the bugs have been fixed in Firefox 148 (the version released this February), although a few fixes will have to wait for the next release. Anthropic’s team used Claude Opus 4.6 over the span of two weeks, starting in the JavaScript engine and then expanding to other portions of the codebase. According to the post, the team focused on Firefox because “it’s both a complex codebase and one of the most well-tested and secure open-source projects in the world.” Notably, Claude Opus was much better at finding vulnerabilities than writing software to exploit them. The team ended up spending $4,000 in API credits trying to concoct proof-of-concept exploits, but only succeeded in two cases. Still, it’s a reminder of how powerful AI tools can be for open-source projects — even if they bring a flood of bad merge requests alongside the useful ones.


Microsoft: Anthropic Claude remains available to customers except the Defense Department

Enterprises and startups that use Anthropic Claude through Microsoft’s products need not fear that the model will be ripped from their reach, Microsoft has confirmed to TechCrunch and other publications. Microsoft is the first big tech company to offer assurance that Anthropic’s models will remain available to its customers even though the Trump Administration’s Department of War — formally known as the Department of Defense — has escalated its feud with Anthropic. The Defense Department designated the American AI startup as a supply chain risk after the AI company refused to give it unrestricted access to its tech for applications the company said its AI could not safely support, such as mass surveillance and fully autonomous weapons. The supply-chain risk designation is typically reserved for foreign adversaries. For Anthropic, the designation means that the Pentagon can’t use the company’s products — and also requires any company or agency that works with the Pentagon to certify that they don’t use Anthropic’s models, either. Anthropic has vowed to fight the designation in court. Microsoft sells an array of products, from Office to its cloud, to many federal agencies including the Defense Department. A Microsoft spokesperson said that the company will continue making Anthropic’s models available within its own products and to Microsoft customers. “Our lawyers have studied the designation and have concluded that Anthropic products, including Claude, can remain available to our customers — other than the Department of War — through platforms such as M365, GitHub, and Microsoft’s AI Foundry, and that we can continue to work with Anthropic on non-defense related projects,” the spokesperson said in an email. CNBC first reported on the comment. This echoes what Anthropic CEO Dario Amodei said in his statement vowing to fight the designation.
“With respect to our customers, it plainly applies only to the use of Claude by customers as a direct part of contracts with the Department of War, not all use of Claude by customers who have such contracts,” Amodei said, adding, “Even for Department of War contractors, the supply chain risk designation doesn’t (and can’t) limit uses of Claude or business relationships with Anthropic if those are unrelated to their specific Department of War contracts.” In the meantime, Claude’s consumer growth surge has continued after Anthropic refused to give in to the department’s demands.


Xiaomi Testing Experimental AI Agent Miclaw, Can Perform Complex Tasks Across Devices

Xiaomi announced on Friday a new artificial intelligence (AI) agent, dubbed miclaw, for its smartphones and smart home devices. The agentic AI assistant has entered a limited closed beta phase, and the company is testing how the agent performs in real-world scenarios. The Chinese tech giant said that the tool is designed to function as a device-wide assistant that can autonomously complete tasks across third-party apps and system features. Currently, there is no word on when Xiaomi miclaw will be integrated into devices.


This AI-Powered Portable Device Claims to Detect Microphones and Jam Audio Recordings

A San Francisco-based startup is making some serious claims about its yet-to-be-released artificial intelligence (AI) audio jamming device. Dubbed Deveillance, the company is said to have developed a portable table-top anti-surveillance device called Spectre I, which can stop nearby microphones from recording audio. The startup claims that it achieves this by using power-efficient omnidirectional signals that are inaudible but can distort the audio recorded by devices within its range. Spectre I is currently available for pre-order, with shipping expected to start later this year.


After Europe, WhatsApp will let rival AI companies offer chatbots in Brazil

Meta is now allowing rival AI companies to provide their chatbots on WhatsApp to Brazilian users for a fee, a day after the company confirmed a similar decision for users in Europe. Earlier this week, Brazil’s antitrust regulator CADE ruled against Meta and rejected its appeal to block an earlier order to suspend its policy change that seeks to bar third-party AI chatbots on WhatsApp. “Upon reviewing the case, the CADE Tribunal determined that the necessary requirements for maintaining the preventive measure were present. According to the case rapporteur, Councilor Carlos Jacques, there is evidence of legal plausibility, considering the relevance of WhatsApp in the Brazilian instant messaging services market,” CADE’s ruling reads. The regulator added that banning third-party AI chatbots on WhatsApp “would not be proportionate” and could result in competitive harm. Meta said in response that it would let third-party AI chatbot providers use its WhatsApp Business API to offer their services on the app for a fee, wherever it is legally required to do so. The company will charge $0.0625 per “non-template message” in Brazil from March 11. “Where we are legally required to provide AI chatbots through the WhatsApp business API, we are introducing pricing for the companies that choose to use our platform to provide those services,” a Meta spokesperson said. Meta announced the policy change last October, which spurred several antitrust investigations, particularly because the company offers its own AI chatbot, Meta AI, inside WhatsApp. The company has maintained that its WhatsApp Business API was not designed to cater to AI chatbots, and that they put a strain on the company’s system. While Meta is now allowing third-party chatbots in some regions because of regulations, developers tell TechCrunch that they are hesitant to resume services, saying the pricing set by Meta is high and could result in high costs.
Zapia, one of the companies that filed the complaint with CADE in Brazil, welcomed the decision. “Competition and preventing powerful companies from limiting how innovation reaches users. At Zapia, we believe people should be free to choose the AI tools they use, and innovation only thrives when the platforms people rely on every day remain open. We will continue challenging these restrictions across the rest of Latin America, and we now look forward to seeing how Meta adapts its policies in Brazil to comply with the decision,” it said in a statement.


BEL & Bellatrix Aerospace Sign MoU to Develop Satellite Systems for VLEO Operations

The two companies plan to develop integrated satellite solutions that would support India’s strategic and civilian space missions.


Nielsen’s India GCC is Building the Operating System for Advertising Intelligence

“Over 80% of our global innovation is happening out of India.”


SoftBank Group Seeks Record $40 Bn Loan to Double Down on OpenAI Bet

SoftBank Group is pursuing a record $40 billion loan to deepen its investment in OpenAI, underscoring Masayoshi Son’s aggressive AI ambitions.
